I’ve been working on data integrations in EPM Cloud Planning using Data Management. I haven’t done a lot with Data Management over the last few projects, so I had to re-learn a bit as I was configuring these integrations. Here are some things, in no particular order, that I came across to keep in mind while building integrations. Though some of these tips may seem very basic and common knowledge, I know it will help me in the future to have something to jog my memory as I’m configuring a new integration.

Concatenating Source File Columns

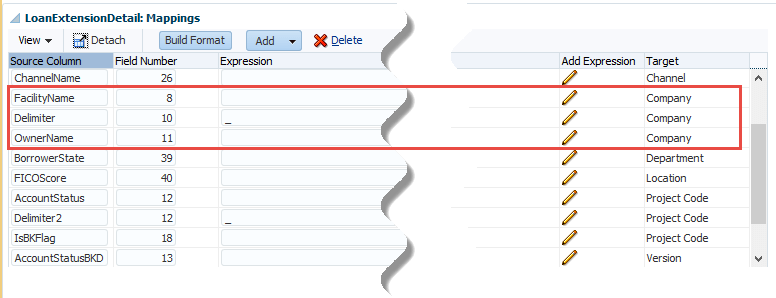

If using a delimiter between the source dimensions, remember to add that dimension row in the proper sequence. For example, to add “_” as the delimiter for concatenating columns 8 and 11 for the dimension Company…

- Add dimension row Company, field number 8

- Add dimension row Company, field number x, expression “_” (without the double quotes)

- Add dimension row Company, field number 11

The field number for the delimiter row can be 1 or greater, it cannot be zero.

Integrating with Another EPM Cloud Application

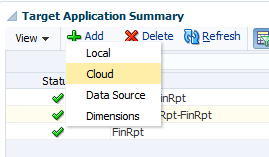

Create the Target Application as Cloud, preferably use an admin user account for the login credentials, and apply a clear and meaningful prefix to help identify the target. Remember that the login credentials password will need to be updated every 120 days per Oracle requirements. This is why it’s best to use an admin account instead of an individual user.

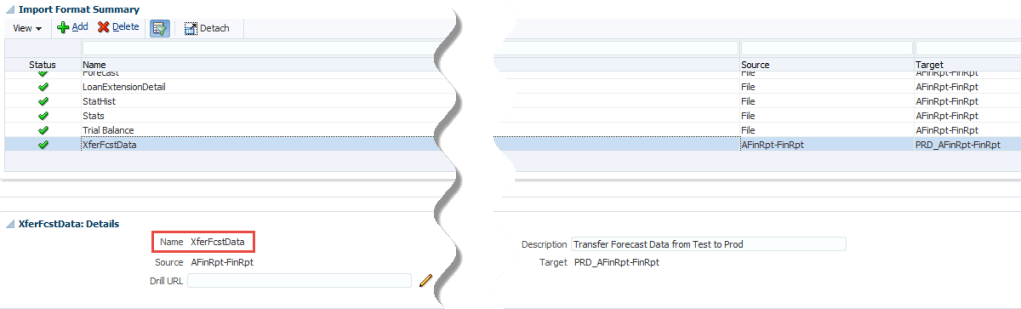

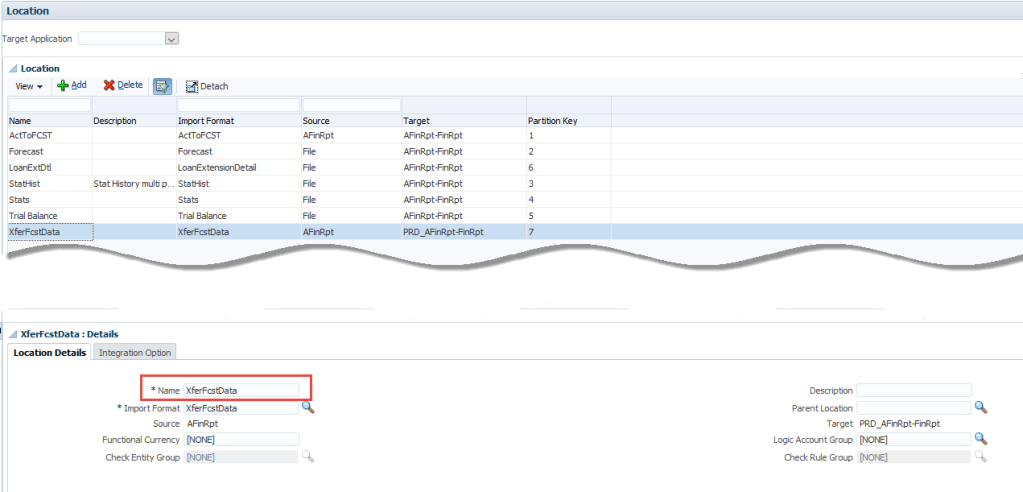

Once the Target Application is created, Import Formats and Locations can be created. I’ve found it useful to use the same name for each.

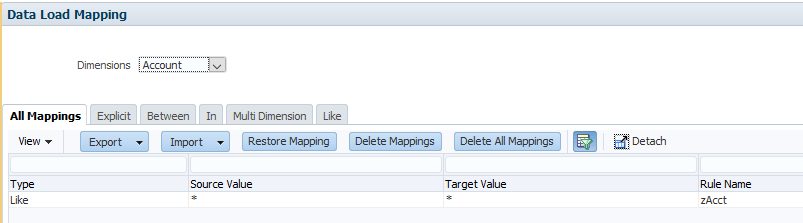

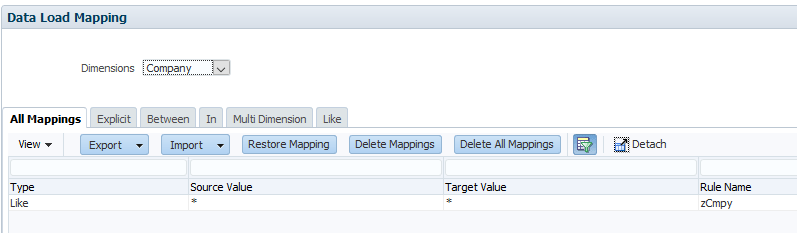

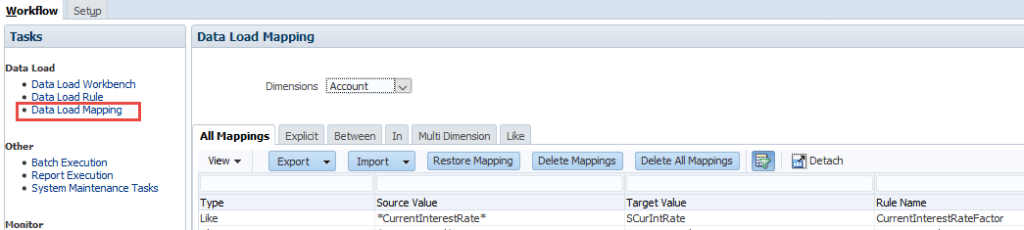

Data Load Mappings

Mappings are tied to the Location, so multiple load rules can be created that all use the same location and therefore the same mappings. This is useful when the purpose of the integration is to copy data from one scenario to another, i.e. current month actuals to a forecast scenario.

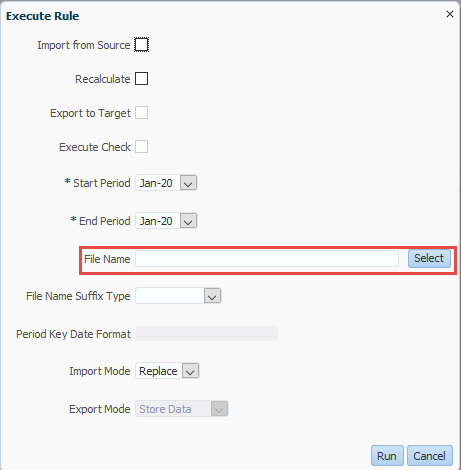

Data Load Rule File Selection

If the file to be loaded does not consistently have the same name, leave the File Name field blank. When executing the rule, select from that prompt which file to load. This can also be scripted if using EPM Automate for the data load process.

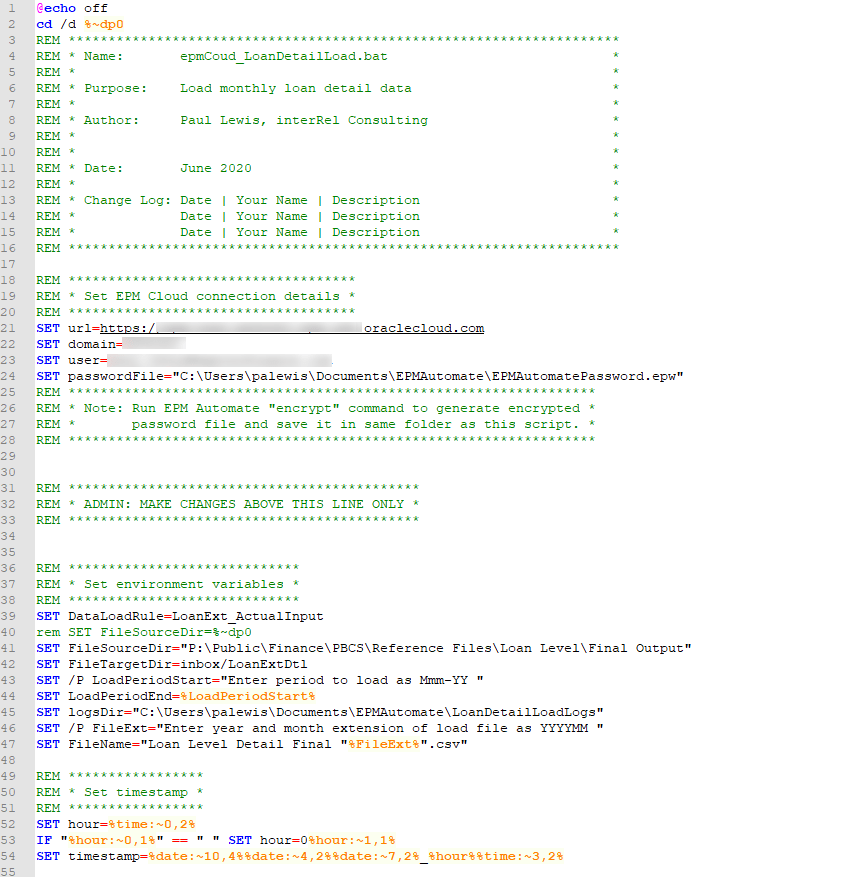

EPM Automate

When doing data loads from files, use an EPM Automate script for the whole process. Login, delete previous file, upload new file, run load rule, then logout. This makes it easier for an end user to run the loads, they don’t have to know much about Data Management nor do they have to go to the web interface to process the load.

Multi-Column File Type

One of the more challenging configurations I’ve had to do is using a source file with multiple data columns. This is handled by creating an Import Format with the file type “Multi Column – Numeric Data”. Set up the dimensions with source and target as you would for a normal file load. For the Amount column, use any number other than zero for Field Number, and then use an Expression to assign the data columns. In this example, the Account dimension is the driver, a header row is identified, members are listed as targets for the data, and the column numbers for the data are listed. The Expression wizard can also be used to create the expression. Full guidance can be found at https://docs.oracle.com/en/cloud/saas/enterprise-performance-management-common/erpia/multicolum_numload_100x55a8222f.html

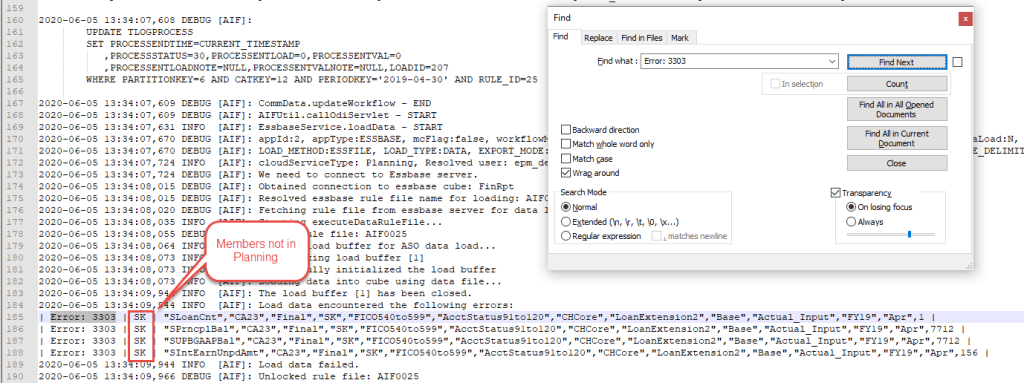

The Expression is … Driver=Account;HeaderRow=1;Member=”SLoanCnt”,”SIntEarnUnpdAmt”,”SPrncplBal”,”SBKCrmDwn”,”SRepoAdj”,”SPrncplAdj”,”SUPBGAAPBal”,”SCurIntRate”,”SRemainTermMths”;Column=3|44|45|47|48|49|50|51|52

Load Failure

When a load fails, it’s often either a validation error caused by an incorrect mapping or a new member in the source that hasn’t been added to Planning.

If a validation error, the issue can be viewed from Workbench. The Validation Errors tab will show which dimension has mapping issues.

Go to Data Load Mapping to correct the mapping.

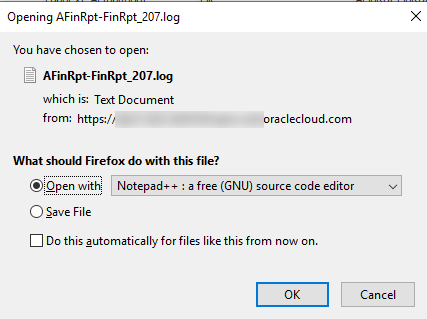

If a missing member issue, from Process Details, download the log file and open in Notepad++ or similar text editor.

Search for “Error: 3303”, this will take you to the member issue. Add the missing members into Planning and re-run the load.

That’s all I can think of at this time, I may add to this post if I come across other useful tips as I continue this integration. I may also go thru these same tasks in Data Integration to show the differences with Data Management.

Thanks Paul! Very helpful!

LikeLiked by 1 person